Stop Generating Boilerplate and Cure Cancer

Oh brother, not yet another post about AI...

One Size Fits None

The dominant AI pitch right now tries to cram every team into one model, force every workflow through one interface, and sell a generalized fix as if context were optional. It sounds efficient, and it sounds scalable. But it erases domain constraints and data realities that determine whether a system works outside a demo.

Market pressure drives this behavior. Teams chase broad claims because broad claims close deals faster than narrow validation. The 2025 AI Index tracks record private investment and rapid adoption, so launch speed keeps beating domain verification.1

The commodity signal is now obvious. Inference costs keep dropping.1 Routing layers make provider switching routine in day-to-day product work.2 I can swap model vendors faster than most teams can run a real safety review, and that tells you exactly where the real bottleneck lives.

It's Statistics in a Trenchcoat

What most people call 'AI' right now is essentially just a slick interface wrapper. I stay fascinated by the machinery underneath it. That layer forces statistical inference, pressure-tests features, and tries to pull signal from noisy and contradictory data.

That is why the messy parts are the interesting parts. In practice, teams wrestle with ingestion drift, patch broken schemas, untangle timestamp conflicts, hunt leakage, and surface segment-level failures that aggregate metrics hide. The actual bottleneck for data teams lives in quiet, systemic failures. Teams trace silent NaNs through vectorized NumPy operations and discover Pandas merges that misalign keys and drop rows without throwing a single error.

In clinical contexts, those failures stop being abstract. A silent schema shift can hide a critical lab value during handoff, and an unexpected type coercion can quietly wipe a patient's history from a timeline. A recent JAMIA review found a scary disconnect: automatic scoring often failed to match what clinicians judged safe or correct.3 That mismatch goes far beyond a minor metrics issue. It's what happens when vendors let models grade their own homework while real people absorb the misses.

Reality is Expensive

Once reality bites back, hiding behind a shiny model gets harder by the week. Capability gaps keep shrinking,1 switching costs keep dropping, and model access has lost its luster in a market where access alone is replaceable.2

That shift forces technical honesty. When access is cheap and interchangeable, teams cannot hide behind demo fluency. They have to prove data quality, calibration, retrieval behavior, and evaluation that survives messy production records. Hybrid design matters because traditional ML and LLM components fail in different ways and need different guardrails.

Research teams already show what that level of honesty looks like. Delphi-2M demonstrates how transformer-scale modeling can track longitudinal disease trajectories.4 Med-PaLM 2 evaluations show where synthesis helps and where workflow limits still bite.5

Rollout Theater

The corporate hype cycle sells manufactured certainty by parading clean dashboards and flawless rollout narratives. Actual scientific rigor strips that away. It forces teams to sit with uncertainty, dig into why systems fail, track where data drifts, and prove whether results generalize.

This is dangerous even before we count the carbon bill. In high-consequence domains, that gap becomes unacceptable. Models can score well in constrained settings and still break when cases become ambiguous and context-heavy.6 Clinical summary evaluation work also shows teams still need robust human standards, even when LLMs assist with scoring and review.7

Responsible teams operationalize skepticism. They track model and data changes, test representative slices, document drift behavior, and trigger fallbacks before failure hits production. FDA PCCP guidance pushes exactly this lifecycle discipline.8

But at What Cost?

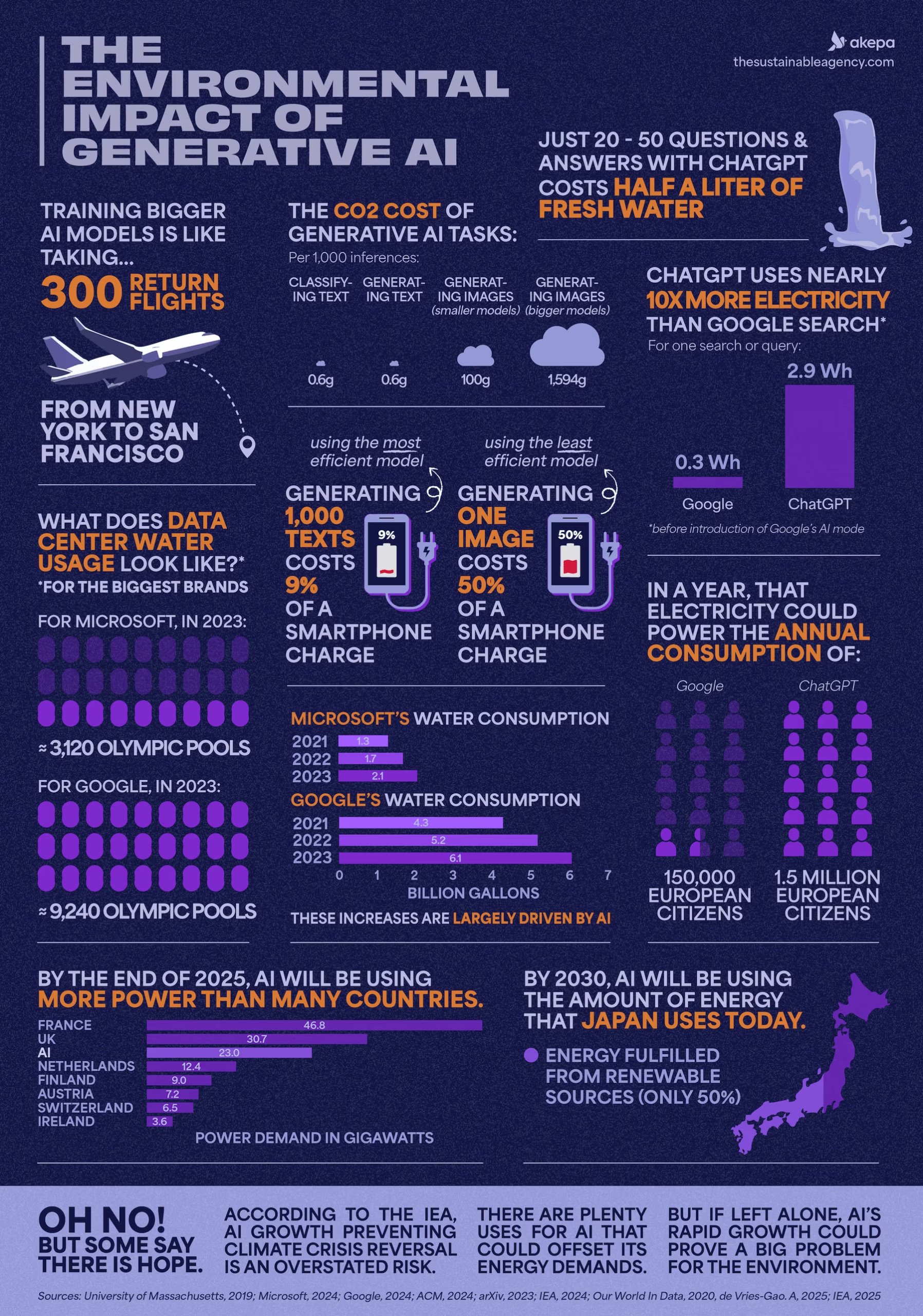

The compute bill is real. A 2026 Patterns analysis estimated AI-related emissions at 32.6 to 79.7 million metric tons CO2e in 2025, putting that range in the same ballpark as New York City's annual emissions.9 A 2026 sustainability explainer translates that benchmark for non-specialists as more than 50 million metric tonnes in one year.10 If we burn that much energy so a model can generate garbage code, endless 8-legged corgies eating ice cream, or generic email copy, the trade is a failure.

Where this technology earns its keep is research infrastructure. ESMO 2025 coverage described synthetic cohorts built from more than 19,000 metastatic breast cancer records, with strong agreement in survival analyses and explicit re-identification checks.11 Dana-Farber's release reports the same program as 19,164 patients and frames it as safer global data-sharing without exposing raw patient records.12 That result comes from hard data architecture work that lets teams share useful cohorts across institutions without leaking raw patient history.

The same pattern shows up in treatment workflows. GE HealthCare's ASTRO 2025 release says early iRT adopters reduced simulation-to-treatment-planning time from seven days to seven minutes in integrated workflows.13 That shift goes far beyond an efficiency metric. It can erase a week of waiting for patients already sitting in treatment anxiety.

Clinical trials are shifting too. A 2025 npj Digital Medicine perspective describes AI-driven eligibility optimization, reinforcement-learning-supported adaptive designs, and digital-twin architectures for faster protocol updates.14 That is the bar. Use heavy compute to compress years of clinical iteration and keep it out of low-stakes boilerplate.

The Bill Comes Due

Cheaper inference multiplies validation work. Lower access costs push model-mediated decisions into more workflows, so each new workflow inherits your data quality debt, monitoring debt, and failure risk.1

Good care and good data science demand the same posture: stare at reality without blinking, track what failed, admit uncertainty under messy conditions,3 and never assume a benchmark score transfers cleanly across high-context scenarios.6

We can keep spending compute on shallow automation, or we can spend it on disease modeling, safer synthetic data sharing, and faster clinical iteration.41114 Only one of those choices justifies the bill.

Healthcare is the proving ground. The same statistical engines parsing messy health records can also model climate anomalies and screen material candidates for better batteries. This tech belongs in expert research workflows with human judgment in command, and I want my hands on those controls.

Footnotes

-

Transformer-scale disease trajectory prediction (Delphi-2M, Nature) ↩ ↩2

-

Med-PaLM 2 long-form medical QA and workflow evaluation (Nature Medicine) ↩

-

Evaluating clinical AI summaries with large language models as judges (PMC) ↩

-

A closer look at AI's supposed climate threat (Patterns, 2026) ↩

-

Environmental Impact of Generative AI | Stats & Facts for 2026 (The Sustainable Agency) ↩

-

How AI is expediting clinical research: the use of synthetic real-world data (ESMO Daily Reporter, 2025) ↩ ↩2

-

Dana-Farber ESMO 2025 release including 19,164-patient synthetic cohort work ↩

-

AI and innovation in clinical trials (npj Digital Medicine, 2025) ↩ ↩2